IEEE 754 is a very widely adopted specification that describes how floating-point numbers should be stored in a binary computer. IEEE is the Institute of Electrical and Electronics Engineers, an international body that, among other things, determines standards for computer software and hardware. Microsoft Excel was designed around the IEEE 754 specification to determine how it stores and calculates floating-point numbers. This may affect the results of some numbers or formulas because of rounding or data truncation. This article discusses how Microsoft Excel stores and calculates floating-point numbers.

Aren't you putting the bytes together in the wrong order? While the 4 bytes of a float are defined by IEEE 754, the byte order is defined by the computer platform, so it may be different for sender and receiver. When transferring bytes between two systems that use a different endianness, the bytes may need to be rearranged: the byte order may be the same, it may be reversed, or the high word may be swapped with the low word. Which case applies should be easy to find out by sending a known float value.

The example code I posted handles each byte separately, so it's easy to change the byte order as needed, or as the transfer between different platforms requires. Just as the sending platform provides the bytes, you could write them into the float's memory one at a time, for example ((byte*)&x)[n] = 0x3F, where the index n depends on the byte order of the receiving platform.
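The three byte orders mentioned above (same, reversed, words swapped) can be sketched in C as follows. This is a minimal illustration, not the code from the original thread: the names `byte_order_t`, `ORDER_ABCD`, `ORDER_DCBA`, `ORDER_CDAB` and `float_from_bytes` are hypothetical, and the sketch assumes the receiving platform's `float` is a 4-byte IEEE 754 single, which holds on common Arduino boards but is not guaranteed by the C language.

```c
#include <stdint.h>
#include <string.h>

/* Hypothetical names, for illustration only.
   A is the most significant byte of the float, D the least significant. */
typedef enum {
    ORDER_ABCD, /* bytes arrive most significant first (big-endian)  */
    ORDER_DCBA, /* bytes arrive least significant first (reversed)   */
    ORDER_CDAB  /* low 16-bit word arrives first, then the high word */
} byte_order_t;

/* Assemble four received bytes into a float.
   Assumes float is a 4-byte IEEE 754 single on this platform. */
static float float_from_bytes(const uint8_t b[4], byte_order_t order)
{
    uint32_t bits;

    switch (order) {
    case ORDER_DCBA:
        bits = ((uint32_t)b[3] << 24) | ((uint32_t)b[2] << 16) |
               ((uint32_t)b[1] << 8)  |  (uint32_t)b[0];
        break;
    case ORDER_CDAB:
        bits = ((uint32_t)b[2] << 24) | ((uint32_t)b[3] << 16) |
               ((uint32_t)b[0] << 8)  |  (uint32_t)b[1];
        break;
    default: /* ORDER_ABCD */
        bits = ((uint32_t)b[0] << 24) | ((uint32_t)b[1] << 16) |
               ((uint32_t)b[2] << 8)  |  (uint32_t)b[3];
        break;
    }

    /* Copy the bit pattern into a float; the bits are not converted numerically. */
    float f;
    memcpy(&f, &bits, sizeof f);
    return f;
}
```

Because the 32-bit pattern is built with shifts, the result does not depend on the byte order of the receiving processor. To find the order a given device uses, send a known value and try each order until the expected number comes out: the bytes 3F 32 2E 3F with `ORDER_ABCD` should give approximately 0.696018.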

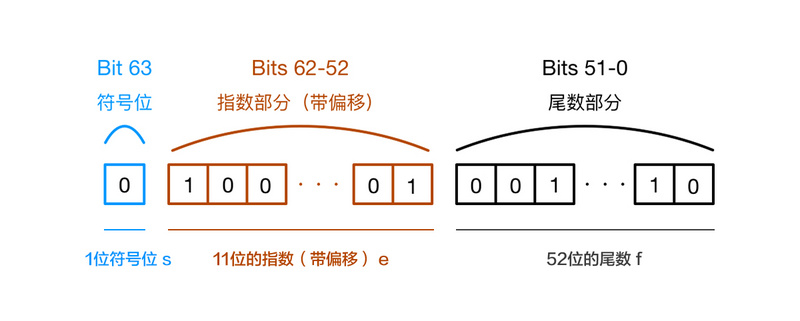

I am just now learning about the "IEEE 754" standard, so please forgive me if I get this wrong, but with 32-bit floating-point numbers, the MSB (bit 31) is a sign bit indicating a positive or negative number, the next 8 bits are the exponent, and the remaining 23 bits are what is called the mantissa. The sign bit is easy: 1 is negative and 0 is positive. The tricky part is the exponent and the mantissa. These two determine the digits as well as the position of the decimal point, which is why 3f322e3f (IEEE 754) is approximately 0.696018, not 1060253247.

To illustrate: the example packet (brought into the Arduino Pro Mini as an unsigned long) 3f322e3f (hex) directly translates to 1060253247 (dec). It is clearly not a float, and it cannot simply be divided to get the intended result of approximately 0.696018 that I need.

I have researched a little, and there are a few videos and web pages on how to do the conversion on paper, using multiple binary and base-10 calculations to work out the correct digits and decimal place. However, I have yet to find anything on how to do this in a program. Parsing the data is not terribly hard with bit-shift commands, but applying the proper maths to obtain the correct result for any given 32-bit string is something completely different, which is why I posted. I hope that with enough trial and error I can figure it out, but as this is a standard in the computer world, I was hoping not to have to reinvent the wheel and that more savvy programmers had already tackled the issue.

You do realise that all numbers in the computer are binary numbers?

The issue is that the 32-bit packet I am receiving from the target device is not directly converted to a float, or at least not the float I need. Hence the "IEEE 754 32 bit floating point" designator in the thread title. I will try the code you offered (thanks), but on the surface it looks to me as though it will simply generate 1060253247.000xxxxxx.

Your method will probably create the wrong result. Read this: Single-precision floating-point format - Wikipedia. The next 8 bits after the sign are the exponent: 0111 1110 = 7E hex = 126 decimal, from which you subtract 127 to get -1. The remaining 23 bits are the mantissa, and you add a one above the most significant bit of this. Then you multiply by 2**-1 to get the answer.
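The paper method described in the thread (split off the sign, subtract 127 from the exponent, put a one in front of the mantissa, then scale by the power of two) can be written out in C roughly as follows. This is a minimal sketch under stated assumptions: the function name `ieee754_decode` is hypothetical, and the special exponent value 255 (infinity and NaN) is not handled. On a platform whose `float` is already an IEEE 754 single, copying the 32 bits into a `float` gives the same result without any arithmetic; the sketch is only meant to show what the bits mean.

```c
#include <stdint.h>

/* Hypothetical helper, for illustration only.
   Decodes a 32-bit IEEE 754 single-precision pattern by arithmetic.
   Infinity and NaN (exponent field 255) are not handled specially. */
static float ieee754_decode(uint32_t bits)
{
    uint32_t sign     = bits >> 31;                     /* bit 31      */
    int32_t  exponent = (int32_t)((bits >> 23) & 0xFF); /* bits 30..23 */
    uint32_t fraction = bits & 0x7FFFFFu;               /* bits 22..0  */
    float    mantissa;

    if (exponent == 0) {
        /* Zero and subnormal numbers: no implicit one, fixed exponent. */
        mantissa = (float)fraction / 8388608.0f;        /* 8388608 = 2^23 */
        exponent = -126;
    } else {
        /* Normal numbers: implicit one in front, exponent bias of 127. */
        mantissa = 1.0f + (float)fraction / 8388608.0f;
        exponent -= 127;
    }

    /* Scale by 2^exponent with plain multiplication or division. */
    float value = mantissa;
    for (; exponent > 0; exponent--) value *= 2.0f;
    for (; exponent < 0; exponent++) value /= 2.0f;

    return sign ? -value : value;
}
```

For the packet from the thread, 0x3F322E3F, the sign bit is 0, the exponent field is 126 (so the power is -1), and the 23 stored bits are 0x322E3F. The mantissa is therefore 1 + 3288639/8388608, about 1.392036, and halving it gives approximately 0.696018.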
